Comments

-

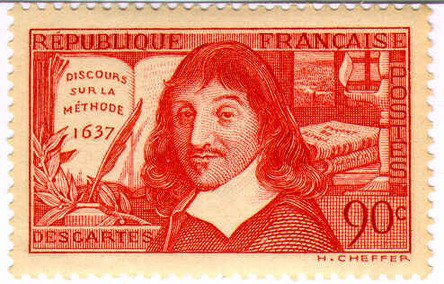

Idealism Simplified

I guess the famous (or infamous) Descartes quote is one of the earliest forms of philosophical idealism...as opposed to visionary idealism, which is a totally different thing. — ProtagoranSocratist

I definitely would say that the Cartesian cogito supports mind-independence (which I have long believed). Although it doesn't inherently imply idealism, I think it works well with Hegel's formulation above, which explicitly does. -

Idealism Simplified

the questions were intended to help clarify what you and Hegel mean with the above proposition... — ProtagoranSocratist

As was the answer.

The section from Hegel definitely goes further than what I explored. However, it very nicely expands the Cartesian cogito in such a way as to render the meaning of idealism intuitively satisfying, which was my take. Among its other interesting aspects is the contention that this self-recognition is its own: "in the name it rediscovers the fact." This elaborates his contention, from another section, that "we think in names": the mechanism whereby the particular and the universal are unified in and through intelligence. -

Idealism Simplified

Bear in mind this is an extension of Hegel's reasoning that (I believe) clarifies the core historical problematic of idealism, that it is somehow refuted (or even refutable) by a naive reductive materialism. I would say Hegel's formulation might be that thought is the form in which reality becomes explicit to itself through the mechanism of history. Mine is (I hope) a modern-informed take. -

Banning AI Altogether

Comprehension is more important than authenticity.

If AI helps me compose more correctly, why not? — Copernicus

TPF has always seemed more compositional than conversational; AI just exacerbates that quality.

So is philosophy a monologue, or a dialogue? When employed compositionally, and edited intelligently, AI output can seem very human. When employed dialogically, AI quickly shows its true face.

No AIs were consulted in the making of this post. -

Currently Reading

Nexus: A Brief History of Information Networks from the Stone Age to AI

by Yuval Noah Harari -

Currently Reading

The Sociological Imagination

by C. Wright Mills

Dewey's Liberalism and Social Action is an absolutely phenomenal little book on the tension between individualistic liberalism and the embedded-embodied forms and features of socialized intelligence. It offers an optimistic and practical perspective, still very much relevant today as social commentary. -

Currently Reading

Left and Right: The Significance of a Political Distinction

by Norberto Bobbio

The biography of Dewey and American Democracy was a long but excellent read. If you aren't familiar with Dewey, it would be phenomenal as a deep introduction to his thought. -

Currently Reading

John Dewey and American Democracy

by Robert B. Westbrook

Critique of Dialectical Reason, Vol. 2

by Jean-Paul Sartre

-

Currently Reading

From a purely business lens, the good thing about an ascetical school is that I imagine it is very cheap to run. All you need is some shacks and daily ration of lentils! Since labor was always a big part of "meditative focus" and the cultivation of humility (often farming, but crafts like basketweaving and ropemaking too), you could maybe even make things self-sustaining to some degree — Count Timothy von Icarus

Well, life has to be ultimately "self-sustaining," so if your philosophy is truly to be a way of life, then it would have to work in that sense too. On the other hand, communities of thought can have "complex identities," as they come to be shaped by visions and personalities that may not always be completely well-intentioned, shall we say. Shared practices can be powerful tools but also dangerous weapons. -

Currently Reading

Philosophical Introductions: Five Approaches to Communicative Reason

by Jürgen Habermas

philosophy as a practice. I have a lot of ideas about this and maybe I will start a thread on it some day. — Count Timothy von Icarus

:up:

Yes please. Authenticity. -

From the fascist playbook

I have always had an unshakeable faith in the hegemony of reason in the universe. I would have thought that its spark must inevitably lead to morality. But I am beginning to think I was wrong. And it scares me.

-

From the fascist playbook

The scary thing is this has all happened before.

The making of a dictator

Cola di Rienzo assumed power in Rome in 1347. He exploited social discontent and promised to restore the nation's former greatness, utilizing inflammatory speeches, populist rhetoric, and nationalistic appeals. Upon seizing power, he initiated a sweeping purge of the judiciary and bureaucracy, replacing officials with loyalists while undermining established legal norms in the name of reform. His regime increasingly relied on spectacle and personal authority rather than institutional stability, fostering an atmosphere where opposition was branded as treasonous and enemies were ruthlessly persecuted.

Rienzo’s governance became erratic and authoritarian, marked by grandiose proclamations and a growing detachment from practical realities. His foreign policy antagonized powerful neighboring states, provoking conflicts that weakened Rome’s position rather than strengthening it. Internally, he curtailed traditional liberties under the pretext of securing order, employing coercion against those who questioned his authority. -

From the fascist playbook

It strikes me that the idealized concept of capitalism, predicated on free trade and the free market, really only exists in its immature state. As it matures, it begins to undermine the very conditions that define it.

The forces that drive capitalism inevitably lead to monopolization and market-manipulation. Capitalism, in maturing, transforms into something fundamentally opposed to its original principles (as Marx thought).

This is horrifically evident, and even more horrifically ignored. The conspiracy of greed runs deep in the human soul. -

From the fascist playbook

Further to the OP, from the introduction to Behemoth, which is an analysis of the fascist playbook:

"the Third Reich developed into a “task state,” in which specific goals were entrusted to prized individuals outfitted with special authority in a fashion that cut across bureaucratic domains and the lines of organization charts"

If a prized individual with special authority cutting across bureaucratic domains doesn't describe Elon Musk's role, then I don't know what does. -

Currently Reading

Behemoth: The Structure and Practice of National Socialism, 1933-1944

by Franz L. Neumann -

From the fascist playbook

Capitalist Democracy versus Democratic Capitalism

I am, as I am myself discovering, very much a disciple of John Dewey, a champion of democracy and the foremost pragmatic philosopher and educator of the early twentieth century.

On the antagonistic relationship of modern democracy and modern capitalism, Dewey writes:

"Power today resides in control of the means of production, exchange, publicity, transportation, and communication. Whoever owns them rules the life of the country...by necessity."

Therefore, says Dewey, in order for there to be a true democracy, there must be a change in the direction of control, from "capitalist democracy" to "democratic capitalism." Whence,

"The people will rule when they have power, [when] they own and control the land, the banks, the producing and distributing agencies of the nation. Ravings about Bolshevism, Communism, Socialism are irrelevant to the axiomatic truth of this statement. They come either from...ignorance or from the deliberate desire of those in power...to perpetuate their privilege." -

Between Evil and Monstrosity

I'm making an argument that "the moral floor" is sinking, or too low, if you are only required to act in accordance with it. The minimum effort is not enough to attain what the minimum effort aims for, a kind world. — fdrake

That seems true. Morality ought to be melioristic. And in a sense, the whole idea of a moral ought is essentially supererogatory. I can see construing the low bar of duty as what has been recognized as a utilitarian heuristic. But if that standard of action is not having adequate effect, that is when a new morality is called for. I guess the question is, who will acknowledge the superior moral imperative? -

Between Evil and Monstrosity

I would say it is constitutive of the nature of morality that it evolves, a la Jung (Answer to Job) and Kierkegaard (Fear and Trembling). The exemplary which is effected can eventually become the new standard. Some people need to actually see what is possible before they are willing to entertain it. Pace Kierkegaard's "knight of faith," although I would tend to apply a secular-moral gloss. Faith doesn't have to be faith in God; it could be faith in truth, or reason, or good.

-

Between Evil and Monstrosity

The rub I was pointing at is that such actions are necessary to bring it about. — fdrake

I think you could see "duty" as the moral floor, below which we should not sink, whereas the supererogatory is the moral ceiling, towards which we aspire. They are exemplary actions, by definition. People do not have to be exemplary. But they can be. They have that capability. -

Between Evil and Monstrosity

By and large, people who perform supererogatory acts do not do so because they are ideologically compelled, but from a deep, personal commitment to universal values. So attempting to cast the supererogatory as a kind of duty or compulsion seems inaccurate.

-

From the fascist playbook

"There is no democracy with a class of 'over-integrated' haves (who are no longer under the effective control of the law, but control the law) and 'under-integrated' have-nots (who are under the control, but no longer under the protection of the law)."

~Brunkhorst, CTLR, p. 313 -

From the fascist playbook

If you mean how is he enacting the fascist playbook, by

Radically expanding executive powers, attempting to dismantle the division of powers and co-opt judicial regulation. — Pantagruel

If you mean where does lack of critical awareness fit in, in getting him elected. -

Believing in God does not resolve moral conflicts

Reason is the collective-cumulative product of human interactions, in other words, of social evolution, which often evolves dialectically, through the juxtaposition of contradictory positions (Hegel).

Critique and negation of norms....must count as a critique of validity claims....the conflict over normative validity is constitutive of social evolution. (Brunkhorst, Critical Theory of Legal Revolutions)

This aligns with my earlier example and explanation, which I think is rather clearer in the context of the OP. To impugn someone's rationality is, by definition, to impugn their beliefs. You cannot make a pretense of some sacrosanct faculty called "reason" when normative beliefs are at least as constitutive of the holistic process and project of thought and communication as is reason.

Peirce says that man is a symbol. He is not reducing the meaning of human existence to propositions. Rather, he is expanding and enhancing the dimensions of symbolicity. -

Believing in God does not resolve moral conflicts

I would also add, reason cannot be the foundation of morality insofar as reason is itself subject to moral constraints and conditions. A discrete or siloed view of reason and morality does justice to neither.

-

Believing in God does not resolve moral conflicts

I think the point to bear in mind is that there is definitely not a consensus that reason operates independently of emotion in the human psyche. There is a holistic thinking process that includes the complete spectrum of human mental states, including logic, emotion, and imagination.

-

Believing in God does not resolve moral conflicts

External perception on the moral case -> Feelings and Beliefs on the case -> Reasoning -> Moral Judgement. — Corvus

So reasoning is a little black box then? Are you in some sense reducing reasoning to logic? As far as I know, there is no consensus on the nature of reasoning (such as is implied by your axiom) that would allow it to be so neatly distinguished from the elements of morality as to allow it to be decisively identified as the basis of morality. -

Believing in God does not resolve moral conflicts

just said, moral judgements must be based on reason. — Corvus

Yes, I know. And as I pointed out, moral judgements, insofar as they may influence actions, which is their entire purpose, cannot be reasonably thought to be solely a function of reason.

Pantagruel


- © 2026 The Philosophy Forum